Shadow AI on the Rise

From Hidden Risk to Strategic Asset

Remember when employees secretly installed Dropbox because IT's file-sharing solution was stuck in 2003? Well, history's repeating itself with AI. The explosion of generative AI tools like ChatGPT has birthed "Shadow AI" – the unsanctioned use of AI in workplaces.

Your employees are already using it. Yes, even Brian from marketing.

Recent surveys reveal a stark reality: while professionals enthusiastically adopt AI tools, most organizations scramble to keep up. The challenge? How to secure and govern AI usage without becoming the fun police.

The Underground AI Economy

The statistics tell a compelling story. By mid-2023, 57% of American workers had tried ChatGPT, with marketing departments leading the charge at 37% adoption. A Salesforce survey found that over half of AI users operate without formal approval.

What started as experimentation has become essential. By early 2024, McKinsey reported 91% of employees use generative AI for work tasks. Within months of ChatGPT's release, 80% of Fortune 500 companies had employees using it.

Executives aren't immune. Upper managers are 3× more likely to use ChatGPT than junior staff. Across every level, people use AI for everything from writing emails and reports to debugging code and drafting marketing copy. Financial professionals digest lengthy documents, HR teams generate job descriptions, and everyone's trying to escape email drudgery.

Yet organizational response lags dramatically. Only 17% of U.S. workers report clear AI policies from their employers. Nearly 70% have never received training on safe AI use. This policy vacuum creates an underground AI economy where employees hide their usage from management.

When Fear Drives Policy

Faced with this reality, many companies panicked. In 2023, major corporations including Accenture, Amazon, Apple, Bank of America, and Samsung moved to restrict ChatGPT access. Two-thirds of top pharmaceutical companies joined the ban wagon.

How'd that work out? About as well as Prohibition.

Studies indicate shadow AI flourishes when organizations simply prohibit it without offering alternatives. Employees find workarounds, use personal devices, or simply ignore policies. Smart companies are shifting from "Just Say No" to "Here's How to Do It Right."

The Real Risks of Shadow AI

The security concerns driving these bans are legitimate. When employees paste company information into public AI tools, that data might be stored or used to train models.

Samsung learned this lesson painfully in 2023. Three separate incidents saw engineers paste proprietary source code and internal meeting transcripts into ChatGPT. Samsung's response? Emergency measures, prompt size limits, and a temporary ChatGPT ban.

They weren't alone. A UK survey found 1 in 5 organizations had employees expose company data via generative AI. JPMorgan and other banks blocked ChatGPT after compliance flagged the risk.

Beyond data leaks lurk compliance nightmares. Healthcare workers who enter patient information risk violating HIPAA, since the public version of ChatGPT is not HIPAA-compliant. FINRA reminds financial firms that AI doesn't exempt them from recordkeeping rules. The U.S. Patent and Trademark Office banned public AI tools pending security review.

Under GDPR, sending EU personal data to an external AI service could be unlawful. The EU AI Act, in force since August 2024 with obligations phasing in over time, mandates transparency for high-risk uses. No wonder 87% of healthcare workers say their employers lack clear AI policies.

Building Bridges, Not Barriers

The solution isn't prohibition but provision. Organizations need secure pathways that satisfy both employee needs and security requirements. This is where modern AI governance infrastructure becomes essential.

Enterprise AI Platforms

Major cloud providers offer enterprise solutions: Azure OpenAI Service, Google's Vertex AI, and Amazon Bedrock provide isolated instances where your data stays yours. For complete control, open-source models like Llama 4 or DeepSeek can run on-premises.

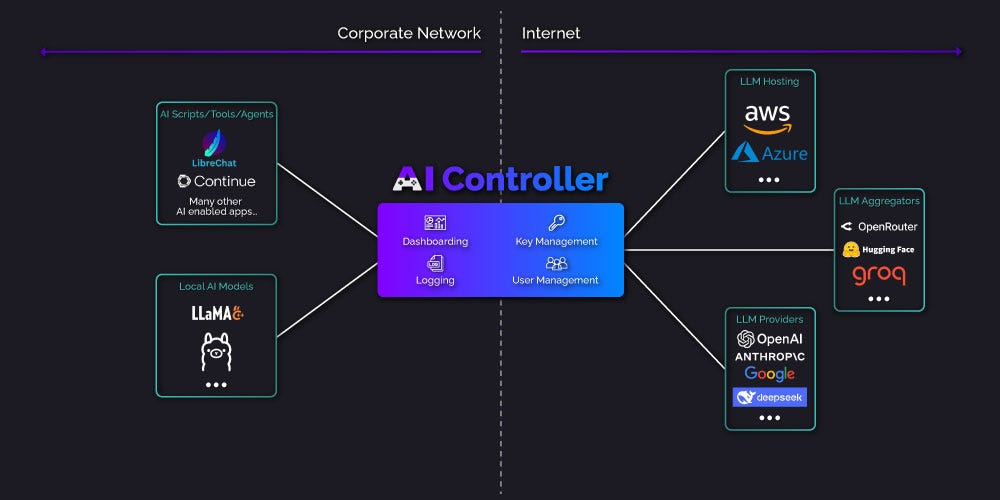

The Gateway Approach

AI gateways act as intelligent intermediaries between users and AI services. They provide centralized control while maintaining the user experience employees expect. These systems log interactions, manage API keys centrally, implement role-based access, and even cache responses to reduce costs.

Modern gateways like AI Controller exemplify this approach. Because they expose OpenAI-compatible APIs, existing tools usually keep working once they are pointed at the gateway's endpoint. Support for multiple LLM providers prevents vendor lock-in. Most importantly, comprehensive logging and access controls satisfy security requirements, while intelligent caching can reduce API costs by 30% or more.
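To make the gateway idea concrete, here is a minimal Python sketch of the four jobs described above: role-based access, audit logging, central key handling, and response caching. It is an illustration only, not how AI Controller or any other product is implemented. The `Gateway` class, the role names, the model names, and `fake_backend` are all hypothetical; in a real deployment the backend callable would hold the provider API key so individual users never see it.

```python
import hashlib
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Gateway:
    """Toy AI gateway: role checks, audit logging, and response caching."""

    # (model, prompt) -> completion. Assumption: this callable is the only
    # place that knows the provider API key, so keys are managed centrally.
    backend: Callable[[str, str], str]
    # role -> set of models that role is allowed to call
    role_models: Dict[str, Set[str]]
    audit_log: List[dict] = field(default_factory=list)
    cache: Dict[str, str] = field(default_factory=dict)

    def complete(self, user: str, role: str, model: str, prompt: str) -> str:
        # 1. Role-based access control.
        if model not in self.role_models.get(role, set()):
            self._record(user, role, model, prompt, "denied")
            raise PermissionError(f"role {role!r} may not use model {model!r}")

        # 2. Exact-match cache keyed on model + prompt.
        key = hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()
        if key in self.cache:
            self._record(user, role, model, prompt, "cache_hit")
            return self.cache[key]

        # 3. Forward to the provider, then cache and log the result.
        answer = self.backend(model, prompt)
        self.cache[key] = answer
        self._record(user, role, model, prompt, "forwarded")
        return answer

    def _record(self, user: str, role: str, model: str, prompt: str, outcome: str) -> None:
        # Log metadata rather than the raw prompt; what to retain is a policy choice.
        self.audit_log.append({
            "ts": time.time(),
            "user": user,
            "role": role,
            "model": model,
            "prompt_chars": len(prompt),
            "outcome": outcome,
        })


def fake_backend(model: str, prompt: str) -> str:
    """Stand-in for a real provider call, so the sketch runs offline."""
    return f"[{model}] reply to: {prompt}"


gateway = Gateway(
    backend=fake_backend,
    role_models={"marketing": {"public-llm"}, "engineering": {"public-llm", "local-llm"}},
)
gateway.complete("brian", "marketing", "public-llm", "Draft a tagline")
gateway.complete("dana", "marketing", "public-llm", "Draft a tagline")  # served from cache
```

The exact-match cache here only helps when two requests are identical. Real gateways may use smarter matching, so treat any savings from this sketch as a lower bound on what caching can do.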

Learning from Early Adopters

Morgan Stanley: Turning Shadow into Strategy

When Morgan Stanley discovered wealth advisors secretly using ChatGPT for research, they didn't ban it. Instead, they partnered with OpenAI to build a secure GPT-4 chatbot trained on internal knowledge.

The result? 16,000 advisors gained instant access to firm insights. Jeff McMillan described it as "having our Chief Investment Strategist, Chief Economist, and every analyst on call 24/7." By late 2023, nearly 50% of employees had access. Shadow AI disappeared because the official solution was better.

PwC: Scale Matters

PwC took a different approach, deploying ChatGPT Enterprise to 100,000+ employees and becoming OpenAI's largest enterprise customer. With robust governance, training, and controls, they turned user enthusiasm into competitive advantage.

The Hybrid Success Story

A mid-sized manufacturer discovered engineers using ChatGPT for technical documentation. Their solution combined an on-premises LLM for sensitive work with monitored public AI access through a gateway. When engineers complained the internal model wasn't as capable, they listened and gradually enabled GPT-4 access with proper controls.

The gateway's analytics revealed usage patterns that helped optimize both security and productivity. By meeting users where they were rather than forcing inferior alternatives, they achieved both compliance and adoption.
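The hybrid setup comes down to a routing decision: sensitive prompts go to the on-premises model, everything else goes out through the monitored gateway. The Python sketch below shows one way that decision could look. The patterns, the `Route` names, and the `user_marked_sensitive` flag are assumptions for illustration; the manufacturer's actual rules are not described in the source.

```python
import re
from enum import Enum


class Route(Enum):
    ON_PREM = "on_prem_llm"
    PUBLIC_VIA_GATEWAY = "public_via_gateway"


# Hypothetical markers of sensitive content. A real deployment would derive
# these from the organization's own data classification scheme.
SENSITIVE_PATTERNS = [
    re.compile(r"\bconfidential\b", re.IGNORECASE),
    re.compile(r"\bproprietary\b", re.IGNORECASE),
    re.compile(r"\b[A-Z]{2,5}-\d{3,6}\b"),  # e.g. internal part numbers like "PN-10442"
]


def choose_route(prompt: str, user_marked_sensitive: bool = False) -> Route:
    """Send anything that looks sensitive to the on-premises model."""
    if user_marked_sensitive:
        return Route.ON_PREM
    if any(pattern.search(prompt) for pattern in SENSITIVE_PATTERNS):
        return Route.ON_PREM
    return Route.PUBLIC_VIA_GATEWAY


for text in [
    "Rewrite this paragraph to be friendlier",
    "Summarize this CONFIDENTIAL design review",
    "What is the torque spec for PN-10442?",
]:
    print(f"{choose_route(text).value:20s} <- {text}")
```

Pattern matching will miss things, which is why the sketch also lets the user flag a prompt as sensitive. Defaulting to the on-premises route when in doubt is the safer design choice.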

The Cost-Security Balance

The financial equation varies by approach:

Public AI Services: Pay-per-use model. Running 20 million words (roughly 27 million tokens at about 0.75 words per token) through GPT-3.5 costs under $100, while GPT-4 runs about $30 per million tokens.

Self-Hosting: Higher upfront investment but potentially lower per-token costs. Analysis shows serving an 8B-parameter model on AWS could process 1 million tokens for $0.42.

Gateway Solutions: Optimize through intelligent caching. By storing common responses, gateways can dramatically reduce API calls and costs while maintaining security oversight.
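The three options above are easier to compare once everything is in the same unit. This rough Python sketch converts words to tokens and prices the same 20-million-word workload under each approach. The GPT-4 and self-hosted prices come from the figures above. The GPT-3.5 price of $2 per million tokens and the 0.75 words-per-token ratio are assumptions, and the sketch treats every cache hit as free, which is optimistic.

```python
# Assumption: roughly 0.75 words per token, i.e. 4/3 tokens per word.
TOKENS_PER_WORD = 4 / 3

# USD per million tokens. Only "gpt-4" and "self-hosted 8B" come from the
# article; the GPT-3.5 price is an assumed figure for illustration.
PRICE_PER_MILLION_TOKENS = {
    "gpt-3.5 (assumed price)": 2.00,
    "gpt-4": 30.00,
    "self-hosted 8B": 0.42,
}


def cost_usd(words: int, price_per_million: float, cache_hit_rate: float = 0.0) -> float:
    """Estimate spend for a workload, treating cached responses as free."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    tokens = words * TOKENS_PER_WORD
    billable_tokens = tokens * (1.0 - cache_hit_rate)
    return billable_tokens / 1_000_000 * price_per_million


WORKLOAD_WORDS = 20_000_000
for name, price in PRICE_PER_MILLION_TOKENS.items():
    plain = cost_usd(WORKLOAD_WORDS, price)
    cached = cost_usd(WORKLOAD_WORDS, price, cache_hit_rate=0.30)
    print(f"{name:26s} ${plain:8.2f}   with 30% cache hits: ${cached:8.2f}")
```

Under these assumptions the workload costs about $53 on GPT-3.5, $800 on GPT-4, and $11 in per-token serving cost when self-hosted. The self-hosted number leaves out the upfront investment mentioned above, so it is not a like-for-like total.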

The return justifies investment. 56% of enterprises report generative AI boosts productivity by over 50%. That ROI changes the conversation from cost to competitive necessity.

Practical Governance Framework

Success requires clear, practical governance:

1. Create Clear Policies

Publish an AI Acceptable Use Policy covering approved tools, data handling rules, output verification requirements, and support contacts. Make it practical, not punitive.

2. Establish Oversight

Form an AI Governance Committee with representation from IT, security, legal, and business units. McKinsey found 91% of successful adopters had governance structures in place.

3. Implement Technical Controls

Deploy secure AI access through enterprise platforms or gateways

Implement usage monitoring and cost controls

4. Train Everyone

Close the 70% training gap immediately. Teach data classification, output verification, and approved workflows.

5. Monitor and Evolve

Track usage patterns, review incidents, and update policies regularly. AI evolves rapidly; your governance must too.
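Steps 3 and 5 both depend on actually counting usage. As a rough illustration, the Python sketch below tracks spend per team against a monthly budget and produces a small report that a governance committee could review. The `UsageMonitor` class, the team names, and the dollar amounts are hypothetical; real gateways and enterprise platforms provide their own monitoring.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class UsageMonitor:
    """Tracks AI spend per team and enforces a simple monthly budget cap."""

    monthly_budget_usd: Dict[str, float]  # team -> budget for the month
    spent_usd: Dict[str, float] = field(default_factory=lambda: defaultdict(float))
    requests: Dict[str, int] = field(default_factory=lambda: defaultdict(int))

    def allow(self, team: str, estimated_cost_usd: float) -> bool:
        """Return True if the request fits in the team's remaining budget."""
        budget = self.monthly_budget_usd.get(team, 0.0)  # unknown teams get no budget
        return self.spent_usd[team] + estimated_cost_usd <= budget

    def record(self, team: str, actual_cost_usd: float) -> None:
        """Record a completed request and what it cost."""
        self.spent_usd[team] += actual_cost_usd
        self.requests[team] += 1

    def report(self) -> Dict[str, dict]:
        """Summarize usage per budgeted team for periodic review."""
        summary = {}
        for team, budget in self.monthly_budget_usd.items():
            spent = self.spent_usd[team]
            used_pct = round(spent / budget * 100, 1) if budget > 0 else 0.0
            summary[team] = {
                "requests": self.requests[team],
                "spent_usd": round(spent, 2),
                "budget_used_pct": used_pct,
            }
        return summary


monitor = UsageMonitor(monthly_budget_usd={"marketing": 10.0, "engineering": 50.0})
if monitor.allow("marketing", estimated_cost_usd=4.0):
    monitor.record("marketing", actual_cost_usd=4.0)
print(monitor.report())
```

Denying requests from teams with no budget entry is a deliberate default: usage has to be registered before it can be monitored.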

Your Action Plan

Shadow AI proves that innovation waits for no one. Your employees have voted with their keyboards: AI delivers value. The choice isn't whether to allow AI but how to enable it securely.

Start Today:

Accept Reality: Skip the ban. Provide sanctioned AI environments that meet user needs.

Deploy Infrastructure: Evaluate AI gateways, enterprise platforms, or hybrid solutions. For organizations seeking rapid deployment with comprehensive controls, solutions like AI Controller offer centralized management, complete audit trails, and multi-provider support with minimal setup.

Communicate Clearly: Draft that AI use policy now. Even basic guidelines beat confusion.

Lead Visibly: When executives use AI properly, it sets the tone. Make AI a celebrated productivity tool, not a guilty secret.

The past two years proved that governed AI adoption doesn't just work; it thrives. Organizations that provide secure, monitored AI access see productivity gains while maintaining security. Those that rely on bans watch shadow AI proliferate underground.

Your employees want to work smarter. It's time to let them do it safely. Turn Shadow AI from lurking risk into competitive advantage. The tools exist, the frameworks are proven, and the benefits are real.

The only question is: will you lead the change or chase it?

Ready to transform Shadow AI into sanctioned productivity? AI Controller offers the security, visibility, and control you need without sacrificing the AI capabilities your employees demand. Start your free 30-day trial at

AI was released all willy-nilly. If the internet were governed by a world organization, it would be far safer, and your plan would have been implemented before AI was released. Then we wouldn't be in this situation to begin with.

With all the big brains in this world, I'm unsure why a world internet organization hasn't already been formed.