Judging the Judges: Can Machines Really Remove Bias from the Justice System?

Why automated risk assessment tools are not the silver bullet solution to discrimination in the courts

The memory is as crisp as the morning air was on that particular day. I found myself amidst the hum of hushed conversations in a courtroom, a place where the stark contrast of worn wooden benches against the solemnity of the judge’s bench seemed to embody the tension between the everyday man and the system. The aroma of stale coffee hung in the air, mingling with the musky scent of aged books and wooden panels. The room was a blend of muted colors, a canvas painted in shades of brown and grey, interrupted only by the dark robes of justice that swayed gently with the rhythm of law.

The case at hand involved a young man, Juan, a Latino in his late twenties with a visage that bore the innocence of youth yet the furrows of worry common to those who find themselves at the mercy of the gavel. His dark eyes scanned the room, perhaps seeking a semblance of hope amidst the stern faces that surrounded him. The judge, a figure of formidable authority with a hawkish nose and eyes that seemed to pierce through the veil of courtroom formalities, was about to set the bail. The crime was petty theft, a minor transgression in the grand scheme of legal misdemeanors. Ordinarily, a reasonable bail would allow Juan to await his hearing in the realm of freedom. However, as the gavel struck, sealing the figure that was much higher than expected, a hushed gasp rippled through the room. Juan’s face paled, the reality of his situation hitting him like a cold wave. His lawyer, a spirited young woman, fervently argued for a lesser sum, emphasizing his clean record bar this single accusation. But the judge remained unmoved, his face a mask of judicial stoicism.

It was in this moment of unfairness that a nagging question struck me: had the scales of justice tilted unfavorably for Juan due to an inherent bias?

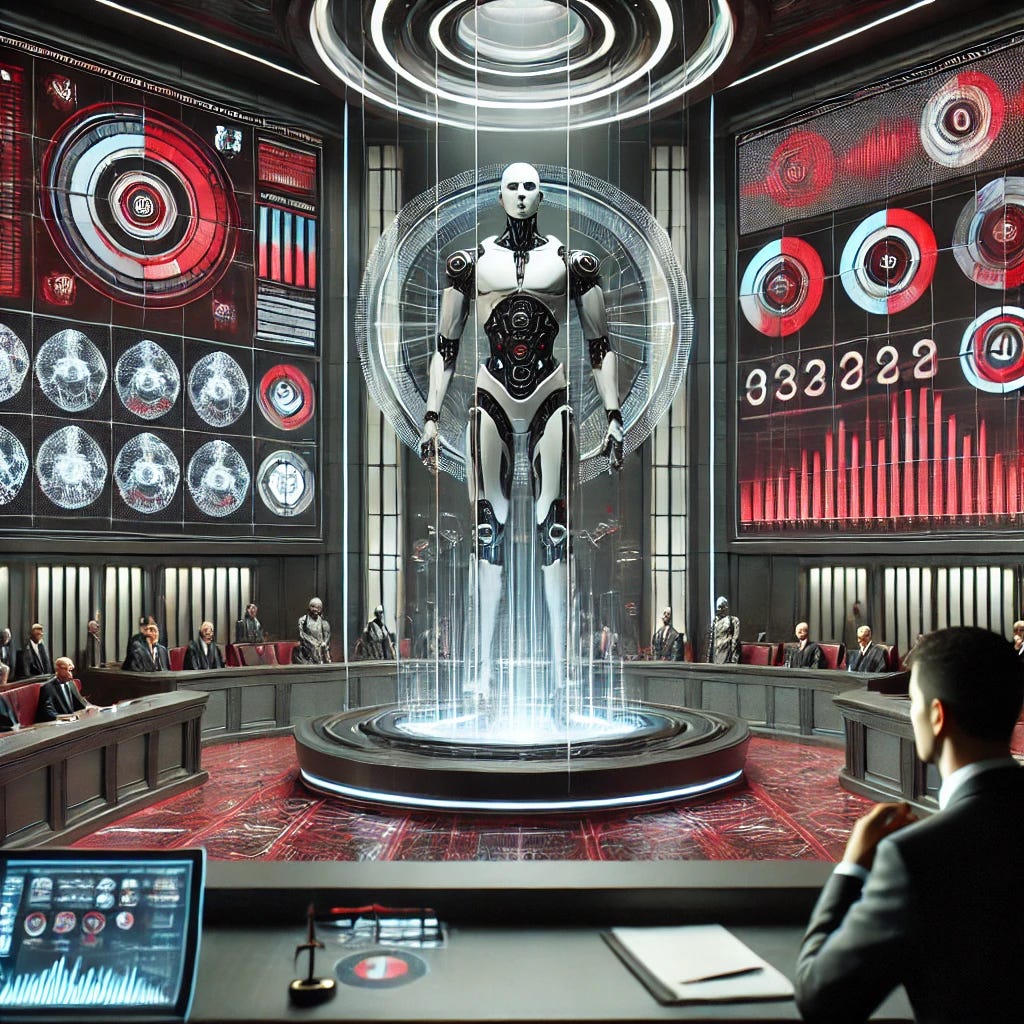

The courtroom experience is an eye-opener, unveiling the subtle yet significant biases that lurk within the folds of judicial discretion. This opens up a vista of contemplation regarding the role of technology as a potential equalizer in the realm of justice. Automated risk assessment tools emerge on the horizon, promising a dawn of unbiased judgement. These digital aides, armed with algorithms and data analytics, vow to assist judges in making more impartial decisions by offering statistical insights based on factors like criminal history, age, and employment status. The underlying premise is simple yet profound - machines, devoid of human prejudices, could provide a balanced, data-driven perspective to the judges, helping to dissolve the age-old shackles of racial and demographic biases.

However, a walk through the maze of legal algorithms makes it evident that while these tools promise to reduce bias in judicial decisions through data-driven analysis, they also harbor critical limitations that we cannot afford to ignore if they are to fulfill their purpose. A nuanced view is needed, rather than seeing them as a cure-all solution. The narrative of them being the magical solution to a deeply ingrained problem is a facade that requires peeling. It requires a deeper, more judicious analysis to ensure that in our quest for fairness, we do not inadvertently perpetuate the very biases we aim to eradicate. As we inch towards a future intertwined with technology, the call for a balanced examination of these tools is vital.

Background

The call for justice has resonated through the ages, echoing the collective aspiration for fairness and equity. In recent times, the spotlight has turned towards racial disparities entrenched in our criminal justice system. The statistics are telling: minorities face incarceration at rates disproportionate to their population. Each controversial judgment or perceived skewed verdict magnifies the simmering discontent, leading to waves of public outrage and demands for reform. The plea is clear - a system embodying transparency and accountability in every verdict rendered.

Judicial discretion lies at the heart of this conundrum. It's a principle that allows judges the latitude to make decisions on matters such as bail, pretrial detention, or sentencing. While necessary for personalized justice, this discretionary power also becomes a gateway for implicit biases to influence judgments. For instance, the setting of bail can become a scenario where economic and racial biases subtly tip the scales. Research shows how these biases, often unconscious, can influence the judgments rendered, yet the umbrella of discretion often shields these biases from scrutiny.

In the face of rising skepticism, automated risk assessment tools have emerged as a potential solution. These algorithmic aids, designed to dissect factors like criminal history, demographics, and social determinants, aim to provide judges with data-driven insights. The idea is simple: temper the human element of judicial decision-making with data-driven objectivity. The narrative of algorithmic fairness has captured the attention of many states and local jurisdictions, leading to a broader adoption of these digital tools.

The underlying rationale is that algorithms, bereft of emotions or prejudices, can serve as impartial arbiters. The statistical analysis they perform is seen as a step towards mitigating biases, offering a level of consistency that human discretion often lacks. Risk scores, derived from these tools, are viewed as a means to infuse a dose of impartiality into the justice system. The idea of a courtroom where judgments are informed by neutral data and not tainted by human biases holds a promise of a more equitable justice system.

However, as we navigate digital transformation within the justice system, a pause to scrutinize the efficacy and integrity of these tools is essential. The arena of justice is complex, and while the allure of algorithmic fairness is tempting, a thorough examination of its potential and limitations is crucial as we chart the course towards judicial reform.

Where current tools fall short

The promise of automated risk assessment tools hinges on their ability to sidestep human bias by grounding decisions in data. However, upon closer inspection, the supposed neutrality of these tools begins to fray at the edges. The core issue lies in the nature of the arrest data used to train these models.

The data these tools rely on is a direct outcome of human actions, specifically those of police officers deciding whom to surveil, stop, or arrest. Models draw from data like prior arrests to make predictions about future criminal behavior. Yet, this arrest data often mirrors the discretion and biases of the police force. The biases in whom they choose to surveil or arrest are encoded in the data, becoming the bedrock on which these risk assessment models are built. The biases in arrest data are not anomalies but systemic issues ingrained in societal prejudices and disparities.

A central fallacy underpins the deployment of these tools: the belief that automation equates to impartiality. In truth, algorithms excel at identifying patterns in the data they are trained on. When fed biased data, they learn and replicate these biases, thereby automating rather than eradicating them. This is a key point that underscores the limitations of current tools.
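To make the mechanism concrete, here is a minimal sketch with invented numbers; none of the figures, group labels, or function names come from a real tool or dataset. It assumes two hypothetical groups that reoffend at the same true rate but differ in how often an offense ends up as a recorded arrest, and a deliberately naive "model" that simply learns the recorded rate for each group.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    offense_rate: float    # assumed true share of people who reoffend
    detection_rate: float  # assumed chance an offense becomes a recorded arrest


def recorded_rearrest_rate(group: Group) -> float:
    """Share of people the arrest records would label as having reoffended."""
    return group.offense_rate * group.detection_rate


def learned_risk_scores(groups):
    """A naive stand-in for a model: risk equals the recorded rearrest rate."""
    return {g.name: recorded_rearrest_rate(g) for g in groups}


# Hypothetical groups: identical behavior, different policing intensity.
groups = [
    Group("lightly_policed", offense_rate=0.20, detection_rate=0.30),
    Group("heavily_policed", offense_rate=0.20, detection_rate=0.60),
]

scores = learned_risk_scores(groups)
for name, score in scores.items():
    print(f"{name}: learned risk = {score:.2f}")
# lightly_policed: learned risk = 0.06
# heavily_policed: learned risk = 0.12
```

Under these assumed numbers, the heavily policed group is assigned twice the risk even though both groups behave identically. The only thing the sketch varies is how closely each group is watched, which is exactly the kind of signal a pattern-finding algorithm will pick up from arrest records.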

Real-world data often shines a spotlight on the biases inherent in arrest data. Studies have shown significant racial disparities in stops and arrests, with certain demographics disproportionately affected. This biased scrutiny leads to a higher number of arrests in these communities, which, in turn, skews the data that trains these models. Similarly, booking charges, often more severe for minorities, are fed into the algorithmic assessment, perpetuating the cycle of bias.

A critical question emerges: Are these tools merely shifting the bias from judges to police? The aim to replace judicial bias with data-driven objectivity hits a snag when the data itself mirrors police bias. The cycle of biases appears to shift upstream, from the courtroom to the streets.

The issue extends to the use of booking charges as an input for risk scores. These charges are often influenced by officer discretion, which is not free of bias. By using booking charges as a metric, we open another channel for upstream biases to influence the tools designed to bolster judicial objectivity.

The narrative of enhancing fairness in judicial processes through automation faces a stern reality check. The revelation that these tools might be automating existing biases instead of alleviating them underscores a pressing need. It beckons a thorough examination and a willingness to address the fundamental flaws that threaten to derail the quest for a fair and equitable justice system.

Progress, with caveats

The success of automated risk assessment tools in judicial settings hinges on the potential to temper human biases with data-driven insights. The anticipation of a swift remedy must be weighed against the stark realities these tools present.

The surface-level appeal of data-driven decisions is understandable, yet the mechanics of these tools reveal a tangle of biases that could potentially perpetuate, if not exacerbate, existing disparities. While the notion of infusing objective data into judicial processes is tempting, the path is fraught with significant caveats that demand a sober appraisal.

The crux of the issue extends beyond mere technical fixes and ventures into the realm of systemic reform. The biased data that feeds these tools is but a symptom of larger, entrenched issues within our policing and judicial systems. Without addressing these root causes, the prospect of achieving a truly equitable justice system remains distant. More equitable policing practices, a reevaluation of flawed laws and policies, and the collection of better, unbiased training data are imperative to foster a fair judicial landscape.

The deployment of these tools carries with it risk, notably the potential to automate and scale biases under the guise of objectivity. The rush to adopt such tools without a thorough understanding of their implications is a precarious venture. Independent validation and ongoing audits are critical to ensure these tools do not stray from their intended goal of promoting fairness. Moreover, a more forceful call for moratoriums on the use of these tools until proper oversight and validation mechanisms are in place is warranted.

The narrative surrounding automated risk assessment tools often teeters on the edge of over-optimism, yet the stakes are too high to allow for a premature or ill-advised deployment. A cautious, evidence-based approach is imperative. Until then, the scales of justice remain tipped, awaiting a thorough and informed effort to untangle the complex interplay of technology and human bias.

What could the future look like?

The hurdles that lie ahead are neither few nor trivial. The quest for fairness, a term that's easily tossed around, yet laden with myriad interpretations and open debates, remains at the heart of this endeavor.

Improving data collection is a crucial step, yet a task easier said than done. It calls for stricter protocols and oversight surrounding arrests, which in turn demands a concerted effort from various stakeholders within the justice ecosystem. Expanding the types of data collected and instilling controls to curtail discretionary biases are imperative. Yet, it’s the community involvement in process reforms that could be a linchpin for fostering public trust and ensuring the reforms are grounded in the realities of those they impact.

The technological frontier is buzzing with efforts to identify and mitigate biases in training data. Algorithms capable of detecting biases and techniques to adjust models offer a glimpse of what might be on the horizon. Yet, these technical solutions are merely one piece of a multifaceted puzzle. The notion of fairness that underpins these efforts is a complex, often contentious one, with various stakeholders holding divergent views on what constitutes fairness in the realm of justice.
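One simple form of bias detection can be sketched as an audit that compares error rates across groups. The example below is an illustration under stated assumptions: the records are invented, the group labels "A" and "B" are hypothetical, and the chosen metric (false positive rate, meaning people flagged high risk who did not go on to reoffend) is only one of several competing definitions of fairness.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Per group: share of non-reoffenders who were flagged as high risk.

    Each record is (group, predicted_high_risk, reoffended).
    """
    flagged = defaultdict(int)    # non-reoffenders flagged high risk
    negatives = defaultdict(int)  # all non-reoffenders
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                flagged[group] += 1
    return {g: flagged[g] / negatives[g] for g in negatives}


# Invented audit data, for illustration only.
records = [
    ("A", True, False), ("A", False, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", True, False), ("B", False, False),
    ("B", False, False), ("B", True, True),
]

rates = false_positive_rates(records)
gap = abs(rates["A"] - rates["B"])
print(rates)               # {'A': 0.25, 'B': 0.5}
print(f"gap = {gap:.2f}")  # gap = 0.25
```

In this made-up sample, people in group B who did not reoffend were wrongly flagged twice as often as those in group A. A different metric could tell a different story on the same data, which is part of why stakeholders disagree about what a "fair" tool looks like.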

Hybrid human-AI systems herald a promise of marrying the best of both worlds. They propose a setup where risk scores serve merely as guiding lights, with human judges holding the reins of final decision-making. This model preserves human oversight, a crucial aspect for ensuring accountability and safeguarding the rights of individuals. However, this hybrid model isn’t without its own set of challenges. It raises pertinent questions around transparency and accountability, demanding clear frameworks that delineate the roles and responsibilities of both the human and algorithmic actors within the system.

Community oversight and involvement in the reform efforts stand as a bedrock for nurturing public trust. Engaging communities in the dialogues surrounding the deployment of these tools, and ensuring their voices are heard and considered in the reform process is crucial. It’s a step towards a more inclusive, democratic approach to justice reform.

The vision of a more balanced justice system, bolstered by the prudent use of automated tools, is both compelling and complex. The challenges that lie ahead are substantial and demand a level of rigor, scrutiny, and inclusivity that goes beyond mere technological fixes. The future is fraught with debates, learnings, and hopefully, iterative progress towards a justice system that stands true to the ideals of fairness and equity.

Conclusion

The discourse surrounding automated risk assessment tools oscillates between cautious optimism and a clear-eyed acknowledgment of the hurdles on the horizon. A more objective, data-driven approach to justice is required, yet the pathway to its realization is strewn with challenges that demand meticulous attention.

These tools, in their nascent stage, are not the silver bullet for the biases that pervade our justice system. The potential to augment justice decisions through a careful amalgam of data and human judgement is compelling. However, the biases ingrained in the data that fuels these tools cast a shadow on their readiness for broad adoption. Before these tools can truly live up to their promise, the hurdles must be addressed head-on with unwavering commitment.

The trajectory towards a more evolved criminal justice system, underpinned by the prudent use of automated tools, isn't devoid of hope. There lies a challenging yet possible roadmap to develop these tools responsibly, mitigate biases, and edge closer to a system emblematic of fairness and equity. However, traversing this roadmap demands rigorous scrutiny, a relentless willingness to iterate, and a grounded approach that neither shies away from the challenges nor gets swept away in over-optimism.

Now, as we stand at the threshold of a new era, the mantra should be to "judge the judges" as well as the machines that aim to augment or undermine their decisions. This line of thought fosters a healthy skepticism and underscores the imperative to meticulously scrutinize both human and algorithmic biases. The quest for a more equitable justice system is a collective endeavor that requires vigilance in overseeing the entire ecosystem. Our voyage towards a fairer justice system has only just set sail, and though the waters ahead are turbulent, the pursuit is worthy of our highest aspirations and unyielding resolve.

AI can help identify patterns. But AI itself is mathematically encoded bias: it distills large data into weighted buckets and then analyzes them against logical structures. Only by recognizing the organizational bias, the data bias, and the bias of the algorithms themselves can we even start to have a conversation about which way we'd like our legal system to bias results.

I attempted to capture some of the implications of the layers of bias last fall because so many of these conversations miss that it's a multivariate and multidimensional problem.

https://www.polymathicbeing.com/p/eliminating-bias-in-aiml